
PC Build Help - Budget around $2000

- Thread starter DoctorRDG


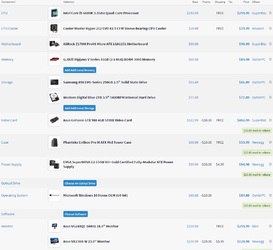

This is my list; it's a little bit over budget.

| Component | Item | Base | Rebate | Shipping | Price | Where |
|---|---|---|---|---|---|---|
| CPU | Intel Core i5-6600K 3.5GHz Quad-Core Processor | $253.99 | | FREE | $253.99 | Amazon |
| CPU Cooler | Cooler Master Hyper 212 EVO 82.9 CFM Sleeve Bearing CPU Cooler | $29.99 | | FREE | $29.99 | Amazon |
| Motherboard | Gigabyte GA-Z170N-Gaming 5 Mini ITX LGA1151 Motherboard | $149.99 | | FREE | $149.99 | B&H |
| Memory | G.Skill Ripjaws V Series 16GB (2 x 8GB) DDR4-3200 Memory | $114.99 | | FREE | $114.99 | Newegg |
| Storage | Samsung 850 EVO-Series 500GB 2.5" Solid State Drive | $149.88 | | | $149.88 | OutletPC |
| Storage | Western Digital BLACK SERIES 2TB 3.5" 7200RPM Internal Hard Drive | $117.10 | | FREE | $117.10 | Amazon |
| Video Card | EVGA GeForce GTX 980 Ti 6GB Superclocked+ ACX 2.0+ Video Card | $649.99 | | | $649.99 | SuperBiiz |
| Case | Cooler Master HAF 912 ATX Mid Tower Case | $53.99 | | | $53.99 | SuperBiiz |
| Power Supply | EVGA SuperNOVA GS 650W 80+ Gold Certified Fully-Modular ATX Power Supply | $89.99 | -$20.00 (mail-in) | $5.99 | $75.98 | Newegg |
| Operating System | Microsoft Windows 10 Home OEM (64-bit) | $99.88 | -$10.00 (mail-in) | | $89.88 | OutletPC |
| Monitor | Samsung S24E650BW 60Hz 24.0" Monitor | $259.99 | | FREE | $259.99 | B&H |
| Monitor | Samsung S24E650BW 60Hz 24.0" Monitor | $259.99 | | FREE | $259.99 | B&H |
| Speakers | Creative Labs GigaWorks T20 Series II 28W 2ch Speakers | $69.99 | | FREE | $69.99 | Amazon |

Base Total: $2299.76

Mail-in Rebates: -$30.00

Shipping: $5.99

Total: $2275.75
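For anyone who wants to double-check the totals, here is a minimal sketch in Python that re-adds the listed prices. The prices, rebates, and shipping come straight from the list above; the $2000 figure is only the "around $2000" from the thread title, so treat the overage as approximate.

```python
from decimal import Decimal

# Base prices copied from the parts list above (USD), in listed order.
base_prices = [Decimal(p) for p in (
    "253.99",  # CPU
    "29.99",   # CPU cooler
    "149.99",  # motherboard
    "114.99",  # memory
    "149.88",  # SSD
    "117.10",  # HDD
    "649.99",  # video card
    "53.99",   # case
    "89.99",   # power supply
    "99.88",   # operating system
    "259.99",  # monitor 1
    "259.99",  # monitor 2
    "69.99",   # speakers
)]
rebates = [Decimal("20.00"), Decimal("10.00")]  # PSU and OS mail-in rebates
shipping = Decimal("5.99")                      # PSU shipping
budget = Decimal("2000")                        # assumption: "around $2000" from the title

base_total = sum(base_prices)
total = base_total - sum(rebates) + shipping
over_budget = total - budget

print(f"Base total:  ${base_total}")
print(f"Total:       ${total}")
print(f"Over budget: ${over_budget}")
```

`Decimal` is used instead of floats so the cents add up exactly.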


I'm not familiar with those monitors, but they seem overpriced. I don't see the point of spending more than $150 on a 1080P monitor (and I consider 1920x1200 in the same class as 1080P).

And a 980Ti is still way overkill for that resolution.

RAM and HDD are also more expensive than they need to be (see my build for cheaper alternatives). What's the point of a 7200RPM HDD these days? If you have a lot of apps to install, grab a 500GB SSD. Just a few apps/games, get a 250GB. HDD should be for data storage only.

You also have an ITX mobo in an ATX case...and the case is fugly-gray and feature-poor.

Meh


With two monitors side by side, he would be gaming at 3840x1200. I would pick the GTX 980 Ti for that, and future games are going to put graphics cards to shame.
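A rough way to compare these resolutions is by raw pixel count. The sketch below assumes each monitor is 1920x1200 (as the earlier reply describes them) and treats pixel count as only a crude proxy for GPU load, since real performance also depends on the game and settings.

```python
def pixels(width: int, height: int) -> int:
    """Total pixels rendered per frame at a given resolution."""
    return width * height

p_1080 = pixels(1920, 1080)  # single 1080p monitor
p_1200 = pixels(1920, 1200)  # single 1920x1200 monitor
p_dual = pixels(3840, 1200)  # two 1920x1200 monitors side by side

print(f"1920x1080: {p_1080:,} pixels")
print(f"1920x1200: {p_1200:,} pixels ({p_1200 / p_1080:.2f}x 1080p)")
print(f"3840x1200: {p_dual:,} pixels ({p_dual / p_1080:.2f}x 1080p)")
```

By this measure a single 1920x1200 screen is only about 11% more work than 1080p, while spanning both screens is a bit more than double.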

PLS panels cost a lot; it is the newest technology. He could save a lot with an IPS panel, or even more with a TN panel.


- Thread Starter

- #26

All of these suggestions are great! For the most part, I'll be using a good monitor for gaming (Xbox/PS4/PC) and the other monitor for a web browser. I primarily game on consoles, so keep that in mind. I still want the ability, though, to play PC games with good video quality and smooth gameplay.

My end goal is to have two monitors (the good monitor being a BenQ/ASUS 24" 144Hz and the normal monitor being the same brand, except between 60-70Hz), used with my consoles as stated above. I also want a PC that I can occasionally game and browse on. Not sure how big my desk will be; big enough to fit the monitors and consoles, for sure. Logitech speakers seem to be the go-to. Mouse and keyboard will come down to preference, etc.


http://pcpartpicker.com/p/6HQWHx

$1738 total, pick your own mouse and keyboard

If you'll be installing more than 3-4 games, you could bump the SSD up to 500GB for another ~$40. Otherwise, I use a 250 and don't run into too many issues. I just keep game files copied to an external HDD, and if I ever run out of room and need to swap in a different game, I copy the game files to the Steam folder (or what have you) and only have to download any updates.

If you're looking to get into overclocking, you could spend another $50ish to upgrade to a nicer motherboard. The one I selected is the bare minimum mATX Z170 option. It will get you a basic OC, however. The Gigabyte GA-Z170MX-Gaming 5, Asus Z170M-Plus, and ASRock Z170M Extreme 4 would also be a step up feature-wise, and the Asus Gene VIII would be the top dog in the mATX form factor (but $100 more).

Other upgrade options:

- Physically matching monitors. Not sure if this will bother you or not.
- 980 Ti instead of 980. Your monitor's refresh rate will be 144 and some people try to achieve a matching 144 FPS. The 980 Ti will get you closest to that, but I would research it a bit. I don't really buy into the need to match refresh rate to FPS... Also, consoles are locked to 60 or less I believe, so
- Upgrade the CPU cooler to a Thermalright True Spirit Power, Noctua NH-D15, Cryorig R1 Ultimate, be quiet! Dark Rock Pro 3... Those will all be substantially quieter than the 212 EVO and cool a bit better as well.
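To put the 144 vs. 60 numbers in perspective, here is a small sketch that converts a frame rate into the time the GPU has to finish each frame. The function name is just for illustration; the math is simply 1000 ms divided by the frame rate.

```python
def frame_time_ms(fps: float) -> float:
    """Time budget for one frame, in milliseconds, at a given frame rate."""
    return 1000.0 / fps

for rate in (30, 60, 144):
    print(f"{rate:>3} FPS -> {frame_time_ms(rate):.2f} ms per frame")
```

Matching a 144Hz refresh rate means rendering each frame in under 7 ms, versus roughly 16.7 ms at 60 FPS, which is why it takes a much faster card.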


Glad you like it

If I used two monitors, I would probably try to match them as well.

I used high end air for a long time, then switched to closed loop water coolers for a couple of years. I used an H90, H110, and H80i GT. Recently, I switched back to an air cooler (True Spirit Power) and I do like its simplicity and (significantly lower) noise level. The only thing the closed loop coolers have going for them, in my opinion, is looks. If you have a windowed case, they do look better in a build. With a few exceptions (Dark Rock Pro 3 and Cryorig R1, for example), air coolers are a little unsightly.

That's my $0.02 on coolers. You get the same performance out of both (high end air and CLC); you're just weighing decreased noise with air vs. better looks with water.

Have fun with the new build and stop back if you have questions along the way.


You guys sure like spending other people's money

Here I am with a rig from 2008 plus an AMD 270 (non X) 2GB and gaming just fine on my 1080p 52" TV. Haven't been around here for a long time, but when did it start that every rig build includes a $600+ video card by default? The games I play aren't bleeding edge, but they are modern and I play with max settings (except AA) and have no issues. Using 1080p and only 1 gaming monitor reduces your graphics processing requirements a great deal. A quality video card in the $150-250 range should be more than adequate. My 270 was $175 2 years ago.


OP picked the budget and it includes all peripherals as well. For their stated needs, you can't really get any cheaper than the builds suggested, buying retail.

If you aren't playing modern AAA games, a $150-250 card (GTX 960 / R9 380X) would probably be okay. In modern games (BF3 or later), however, you'll be getting 50 FPS or less on Ultra @ 1080P with those cards (probably 30-40 with your 270).

To max today's games @ 1080 and maintain a smooth frame rate, you need an R9 390 or GTX 970, at a minimum. With OP's budget, they can afford to bump that up a level (390X or 980) and be ready for 2016's slate of games as well.

It's just a matter of what visual quality you're willing to accept and what FPS feels smooth to you. I like Ultra and 60+ FPS (not to mention 1440P). That requires a more expensive GPU.

EDIT: Also, get this man a badge


When a person comes for help building a rig at a price point, we build it to that point. If you look at the first build I put together, I actually did try to save a few dollars. Who knows what the financial status of the OP is; maybe 2k is pocket change for him? If the OP came and said build me a rig for 1k, I'm sure we could have done that as well.


I know where you're coming from. I had a GTX 570 and now I have a GTX 970, and I play BF4 on Ultra at 1080p with a 60 FPS minimum. I could have used a GTX 960, because my FPS went through the roof with the GTX 970; however, I like a little future proofing.

One interesting thing: after all these years, I finally have a graphics card where the memory bus and memory speed are fast enough that I can swing side to side slowly to scan the scenery, smooth as butter, in all my first person shooter games. I used to get something like a choppy look or a frame skip when swinging side to side slowly in first person shooters. I know it's the memory bus and memory speed on the video card because when I turn off V-sync it comes back; I think that's because it overdrives the video memory and bus so they can't keep up with the data. I also get screen tearing on my 60Hz monitor with V-sync off.

If you really think about it, the hardest work for a video card in a first person shooter is swinging side to side slowly: it has to load data from video memory so fast. Going in a straight line, by comparison, the data movement is slow because you are looking at the same objects.

If you like the new 144Hz monitors, you can use a GTX 970 or 980 with V-sync off, with FPS up to 144.

I'm not sold on high FPS past 30-60 and never was. After all these years since 2004 of owning and using many different video cards, with game settings on the highest causing a choppy look or a frame skip when swinging side to side slowly, my gaming is now a perfect balance because of the video memory speed and bus.


http://www.bit-tech.net/hardware/graphics/2013/11/13/amd-radeon-r9-270-review/3

47 min 59 avg FPS

Looks good enough to me, and that's with 4x AA and an Ivy Bridge platform. I've actually been considering upgrading the rest of my system, but leaving the video card alone as it is doing fine.

--

Moving forward 2 years, with BF4 and the GTX960, more advanced game and slightly more expensive graphics card, you get similar FPS as above. 45 min 56 avg.

http://www.tomshardware.com/reviews/nvidia-geforce-gtx-960,4038-4.html

At most I'd recommend a GTX970 for a 1080p gamer right now. Anything more is just throwing money away.


...unless you prefer not having dips into the 40s.

Can you actually see it, or is it a mental thing?

Oh boy, we're going here: FPS less than 60. I really think people can't see it; I have done blind tests.

Varies person to person. I think, even more so than just differences in vision, it's differences in what a user is accustomed to. 10 years ago, I was comfortable with 30+ FPS. 5 years ago, I was comfortable with 50+. Now, I'm comfortable with 60+. As my hardware has improved, I've gotten used to smoother frame rates and the difference when going backwards is more evident.

Though I can only speak for myself and what I'm comfortable with, the human eye is physiologically capable of detecting the difference in frame rates even higher than 60.


No, the human eye and brain can't tell the difference between 25-30 FPS and 60 FPS and beyond. QUOTE: The human eye and its brain interface, the human visual system, can process 10 to 12 separate images per second, perceiving them individually.

Here is the link. https://en.wikipedia.org/wiki/Frame_rate

Movies are still at 24-25 FPS and people still can't tell the difference. Also, for all HD TV at 1080i, the (i) means interlaced: out of the 60Hz, first the odd lines are drawn on your screen, then the even lines, which splits the picture into 30Hz for each (can you see that happening? NO).

The only reason people think FPS is so important today, compared to when I had my Voodoo2 video accelerator running Glide with my Need for Speed SE that ran so smooth at 20-30 FPS, is that if there is blurring, micro stutter, or frame skip, people get new graphics cards, and the only thing we test is FPS, with the understanding that a new graphics card always helps anyway. Graphics are so complicated now that FPS is not telling the whole story anymore.

If you want to take a blind test to see if you can tell the difference between 60 FPS and 30 FPS, I will do a run-through of BF4 at 1080p, locking my GTX 970 at set frame rates, and I won't have the OSD.


Sorry, but no. Movies and TV use blurring to make 24 FPS seem smooth. Modern games with the motion blur feature attempt to do the same for systems with lower FPS.

If frames from a movie were not blurred, it would look like garbage (just like a game running at 24 FPS without motion blur would)...

EDIT:

Also, this is like 2 paragraphs down from what you quoted from that wiki:

However recent studies have shown that the Retina actually juggles when processing information. In 2014, it was shown during research that the human eye could see at various frame rates varying from person to person. [10] In 2011, written on a forum was said that the retina takes about 5 to 12 milliseconds for an electrical impulse to fire and reset, 100 to 1000 rods depending on where in the retina you are, can fire every 7 milliseconds on average or around 140 fps.[11] Another website said that the human eye on average could see up to 150 fps. It is still unsettled on what the average "frame rate" of the human eye is, but so far based on recent studies within the last decade, show the human eye seeing anywhere between 75 to 150 fps with an average of about 140 fps.

Which reads to me as: stop looking at wikipedia for information haha "written on a forum was said" LOL
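Whatever the sourcing, the arithmetic in that passage is easy to check. A minimal sketch, assuming (as the quoted text implies) that one "frame" corresponds to one fire-and-reset interval; the function name is made up for illustration:

```python
def rate_from_interval_ms(interval_ms: float) -> float:
    """Events per second if one event takes interval_ms milliseconds."""
    return 1000.0 / interval_ms

# Intervals taken from the quoted passage: 5 to 12 ms, 7 ms on average.
for label, interval in (("fastest", 5), ("average", 7), ("slowest", 12)):
    print(f"{label}: {interval} ms -> {rate_from_interval_ms(interval):.1f} per second")
```

So 7 ms does work out to roughly 140 per second, and the 5 to 12 ms range maps to about 83 to 200 per second. That only shows the quoted numbers are self-consistent, not that the claim itself is well supported.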
