I'm not familiar with the way that the E@H CUDA app is coded, but your results seem consistent with what happens with the SETI CUDA app on Fermi (GTX 4xx/5xx) cards. Fermi cards are designed for massively multi-threaded apps, and there's only so far you can parallelise an app before severe diminishing returns set in and the speedup eventually asymptotes out.

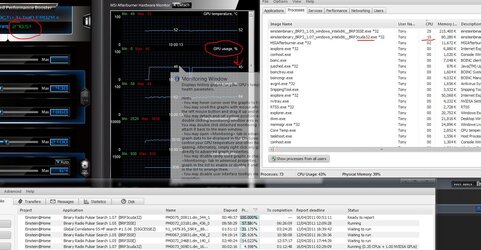

Running a single S@H instance on a GTX560 will only get ~30-35% GPU usage. To combat this, most users run several instances at once (usually 3 or 4 gives the best performance, RAC-wise). I just took a look at your GTX560 on the E@H site, and I see that the E@H app only uses between 90 and 250MB of GPU RAM, so you could look into running several instances and see how it plays out.

To do this, you need to modify your app_info.xml file, located in (assuming Windows 7 and a default BOINC install) %AllUsersProfile%\BOINC\projects\<einstein@home folder>. What you're looking for is

Code:

<coproc>
    <type>CUDA</type>
    <count>1.000000</count>
</coproc>

Changing the <count> to 0.5 tells BOINC that each task only needs half a GPU, so 2 instances of E@H will run on the GPU at once; 0.333333 gives 3, etc. I'd try out 0.5 first to see if it improves your situation. Note that you will then see 2 instances of the CUDA app running in Windows task mangler, each using some more CPU time, and your GPU RAM usage will go up as well.
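If you'd rather not hand-edit the value, here's a rough Python sketch of the same change. The `SAMPLE` string is a trimmed-down stand-in I made up (the real app_info.xml has a lot more in it, and I'm assuming the `<coproc>` block sits somewhere under the root), and `count_for_instances` / `set_cuda_count` are just names I picked for illustration, not anything from BOINC.

```python
import xml.etree.ElementTree as ET

# Hypothetical, trimmed-down stand-in for app_info.xml. The real file has
# more elements; this layout is an assumption for illustration only.
SAMPLE = """<app_info>
  <app_version>
    <coproc>
      <type>CUDA</type>
      <count>1.000000</count>
    </coproc>
  </app_version>
</app_info>"""


def count_for_instances(instances: int) -> str:
    """Return the <count> text that lets `instances` tasks share one GPU."""
    if instances < 1:
        raise ValueError("instances must be at least 1")
    return f"{1.0 / instances:.6f}"


def set_cuda_count(xml_text: str, instances: int) -> str:
    """Rewrite every CUDA <coproc><count> in xml_text for `instances` tasks per GPU."""
    root = ET.fromstring(xml_text)
    for coproc in root.iter("coproc"):
        # Only touch coprocessor blocks whose <type> is CUDA.
        if (coproc.findtext("type") or "").strip() == "CUDA":
            count = coproc.find("count")
            if count is not None:
                count.text = count_for_instances(instances)
    return ET.tostring(root, encoding="unicode")


if __name__ == "__main__":
    # Show the sample with <count> set for 2 instances per GPU.
    print(set_cuda_count(SAMPLE, 2))
```

Back up the real file before trying anything like this on it; editing the single `<count>` line by hand is just as good.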

On the CPU time note, you can set how much CPU time is dedicated to feeding the GPUs in the same app_info.xml file by changing

Code:

<avg_ncpus>0.200000</avg_ncpus>
<max_ncpus>0.200000</max_ncpus>

these values. From the looks of it, E@H defaults to 0.2, i.e. 20% of a CPU core per GPU task. This differs from project to project (SETI uses 0.040000, or 4%, by default, for instance). You could try messing around with these numbers, but I'd only attempt that if running multiple instances of the CUDA app doesn't help (which it should).
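To get a feel for how the CPU side adds up once you run several instances, here's a quick back-of-the-envelope sketch in Python. It assumes each GPU task is budgeted avg_ncpus of a core independently, so the total is just instances times avg_ncpus; as far as I know that's a scheduling estimate rather than a hard cap, so treat the numbers as rough. `reserved_cpu_cores` is a name I made up.

```python
def reserved_cpu_cores(instances: int, avg_ncpus: float) -> float:
    """Total CPU cores budgeted for feeding `instances` GPU tasks.

    Assumes each task is budgeted `avg_ncpus` of a core on its own.
    """
    if instances < 1 or avg_ncpus < 0:
        raise ValueError("need at least 1 instance and a non-negative avg_ncpus")
    # Round to 6 places to match the precision used in app_info.xml.
    return round(instances * avg_ncpus, 6)


if __name__ == "__main__":
    # Compare the E@H default (0.2) with the SETI default (0.04).
    for n in (1, 2, 3, 4):
        print(n, reserved_cpu_cores(n, 0.2), reserved_cpu_cores(n, 0.04))
```

So 2 instances at the E@H default come to about 0.4 of a core, and 3 come to about 0.6, which is still less than one full core.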

thideras said:

If you pause the cpu work units, does the GPU one go up? The video card requires some processor to feed it information. If you max out all the cores, it is left with very little processing power.

Most projects I've seen run their CUDA apps at a slightly higher process priority upon startup, which -should- minimise the effect of running all CPU cores maxed out. I can't see anything in the stderr output that states this, though.