AMD going 20nm this year?

- Thread starter mjw21a

- Start date

- Joined

- Mar 25, 2011

- Location

- Pacific NW USA

45 to 32nm = die shrink. The term "die shrink" is usually associated with "process shrink", so yes, it's a bit of a misnomer, but whether or not the die size actually shrinks, decreases in process lithography geometry are called die shrinks.

In this sense we're talking about die size reduction which would equate to more dies per wafer. It was claimed that going from 32nm to 22nm doubled the dies per wafer.

You don't get a die shrink when they keep adding more transistors. That was the whole point of lithography node shrinks: 2 cores, 4 cores, 6 cores, 8 cores, the IMC, the IGP, and some day the south bridge controller.

Intel® Core™2 Quad Processor Q9450 quad core.

(12M Cache, 2.66 GHz, 1333 MHz FSB)

Processing Die Size 214 mm2

http://ark.intel.com/products/33923...cessor-Q9450-(12M-Cache-2_66-GHz-1333-MHz-FSB)

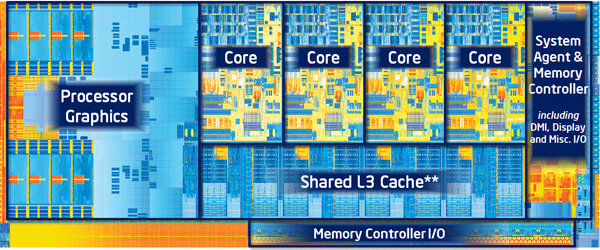

Intel Sandy Bridge 4C 32nm 216mm2 quad core.

http://www.anandtech.com/show/4083

Sandy Bridge-E LGA 2011 435 mm²

45nm to 32nm no die shrink, die expand.

The 217 mm² of Pentium 4 are more than double the size of Pentium III and almost double the size of Athlon. This seems rather surprising when you realize that Pentium 4 has got 42 million transistors and Athlon 37 million. Each of the four is manufactured in 180 nm process. Bottom line: Pentium 4 is big

- Joined

- Dec 7, 2011

In this sense we're talking about die size reduction which would equate to more dies per wafer. It was claimed that going from 32nm to 22nm doubled the dies per wafer.

Ahh, didn't know the conversation had gone that direction. However, fact remains that 32nm to 20nm is still considered a "die shrink" regardless of what the final die area is after the final architecture is determined. Syntactically wrong, but colloquially correct. Just how it is. If all die shrinks were truly "die area shrinks", then CPUs would be the size of a pinhead by now.

Even if 32 to 22 did double the dies per wafer, it wouldn't double the final die production per wafer. At 22nm the fraction of failed (unsellable) chips is much higher than at 32nm. i.e. the yield is lower at 22nm than at 32nm.

That said, using these small-process CPU architectures in mobile devices at significantly reduced voltage reduces the heat load per mm^2 that needs to be removed from these vanishingly small traces.
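To put rough numbers on the yield point above, here is a minimal Python sketch. It assumes the common gross-dies-per-wafer approximation and a simple Poisson yield model. The 300 mm wafer, the die areas, and both defect densities are made-up illustration values, not figures for any real process.

```python
import math


def gross_dies_per_wafer(wafer_diameter_mm, die_area_mm2):
    """Common approximation: wafer area / die area, minus an edge-loss term."""
    radius = wafer_diameter_mm / 2
    whole_wafer = math.pi * radius ** 2 / die_area_mm2
    edge_loss = math.pi * wafer_diameter_mm / math.sqrt(2 * die_area_mm2)
    return int(whole_wafer - edge_loss)


def poisson_yield(die_area_mm2, defects_per_mm2):
    """Fraction of dies with zero defects under a Poisson defect model."""
    return math.exp(-die_area_mm2 * defects_per_mm2)


def good_dies(wafer_diameter_mm, die_area_mm2, defects_per_mm2):
    """Expected number of sellable dies per wafer."""
    gross = gross_dies_per_wafer(wafer_diameter_mm, die_area_mm2)
    return gross * poisson_yield(die_area_mm2, defects_per_mm2)


# Hypothetical inputs: a mature node with a 216 mm^2 die and few defects,
# versus a new node with the die halved to 108 mm^2 but 5x the defect density.
mature = good_dies(300, 216, 0.001)
immature = good_dies(300, 108, 0.005)
print(f"good dies, mature node: {mature:.0f}")
print(f"good dies, new node:    {immature:.0f}")
print(f"gain in good dies:      {immature / mature:.2f}x")
```

With these assumed inputs the gross die count roughly doubles, but the gain in good dies is only about 1.5x, which is the gap between "dies per wafer" and "final die production per wafer".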

- Joined

- Mar 25, 2011

- Location

- Pacific NW USA

Ahh, didn't know the conversation had gone that direction. However, fact remains that 32nm to 20nm is still considered a "die shrink" regardless of what the final die area is after the final architecture is determined. Syntactically wrong, but colloquially correct. Just how it is. If all die shrinks were truly "die area shrinks", then CPUs would be the size of a pinhead by now.

Even if 32 to 22 did double the dies per wafer, it wouldn't double the final die production per wafer. At 22nm the fraction of failed (unsellable) chips is much higher than at 32nm. i.e. the yield is lower at 22nm than at 32nm.

That said, using these small-process CPU architectures in mobile devices at significantly reduced voltage reduces the heat load per mm^2 that needs to be removed from these vanishingly small traces.

I consider that a "process node size reduction", not a "die shrink" (the two terms are used too interchangeably, in my opinion, since they are different things). The die size can vary at any node depending on the architecture, whereas the node size relates to the actual feature size of the lithography process and the density of the circuit.

The point I was trying to make is that as the advanced nodes have moved past 28nm, the rules have changed. Before, moving to the next process node yielded many benefits (many more transistors while keeping the die size the same as or smaller than the previous "gen", reduced power consumption, etc.) without many negatives. That's changed significantly. The processes have become exponentially more complex (and thus more expensive), new problems have arisen that they haven't had to deal with before (the "walls" between traces becoming so thin that current leakage is an ever-increasing problem, etc.), and the profit margins on the chips have shrunk and will keep shrinking without significant breakthroughs (a silicon replacement, etc.). The whole point is that I think it's a smart move on AMD's part to hold back on moving toward 22nm and below when they don't necessarily need to. Maybe we'll see 20nm in later steppings of Trinity or in the next-gen mobile parts, which won't be overclocked and will benefit from low power consumption, but I don't see that happening with the Piledriver desktop SKUs, which I think will remain at 32nm until many of the problems below 28nm are solved.

- Joined

- Sep 29, 2004

- Thread Starter

- #167

Well, technically, provided the architecture doesn't change and nothing is added, a process node reduction is a die shrink. The one exception is when additional items are added and the die size remains the same or similar...... But yeah, I get what you mean

- Joined

- Oct 11, 2005

- Location

- Tau'ri

Source 2009 article!!! Not new information!!

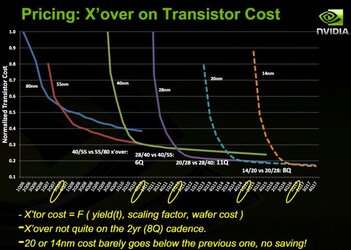

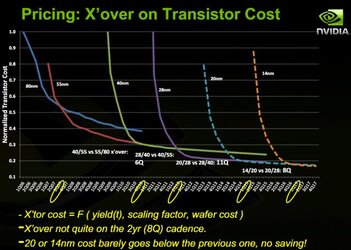

Intel showed off the first 32nm SRAM test chips at the IDF in 2007, and this year they showed us their first 22nm SRAM test chips. With the 22nm process, Intel will be able to produce four times the number of chips per wafer of the 45nm process, thus making CPUs even cheaper once the process reaches mature yields.

That is four times as many chips per wafer as 45nm (referring to cache, I think? SRAM, which takes up more area than the cores these days).
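As a sanity check on the "four times" figure, here is a tiny Python sketch of ideal area scaling. It assumes the same design is shrunk with perfect scaling and that the node name tracks the linear feature size, which is only an approximation; the function name is made up for this example.

```python
def ideal_dies_per_wafer_gain(old_node_nm, new_node_nm):
    """Ideal gain in dies per wafer for an unchanged design.

    Area scales with the square of the linear feature size, so the
    number of dies that fit on a wafer scales with the inverse.
    """
    return (old_node_nm / new_node_nm) ** 2


print(f"45nm -> 22nm: {ideal_dies_per_wafer_gain(45, 22):.2f}x")
print(f"32nm -> 22nm: {ideal_dies_per_wafer_gain(32, 22):.2f}x")
```

Under those assumptions 45nm to 22nm comes out a little over 4x and 32nm to 22nm a little over 2x, so both claims quoted in this thread are consistent with ideal scaling of an unchanged design. Real products add transistors, so actual die sizes do not follow this.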

Comparing the Q6600 to Sandy Bridge or Ivy Bridge is not accurate, since no one makes the Q6600 anymore, and it has neither the cache, the IGP, nor the IMC that later chips have. I thought the Lynnfield die size was a better comparison: it had IMC and IGP and more cache than the Q6600.

Anyway, it was not MY claim that the number of chips doubled, it was news sources that claimed it. I just repeated it

Also this part

Yields are very good and defect density is steadily decreasing.

They do not say whether it is decreasing relative to the number of defects on previous node sizes, only that it is decreasing. What that means, I do not know.

Nvidia discussing die shrink

Now Nvidia seems to be saying what wingman is. They are complaining (despite what the slide shows) that die shrinks take longer to hit an equitable price/transistor ratio. Of course, their die shrink from 90nm to 55nm also saw the transistor count move from 681M to 1.42B in one generation!

- Joined

- Mar 25, 2011

- Location

- Pacific NW USA

Essentially, what Nvidia is saying is this. As an architecture is released and the process matures, the per-transistor cost decreases over time, which means higher profits as well as price reductions for the consumer. When the next-gen GPU is released on a new process node (e.g. going from 55nm to 45nm), the per-transistor cost rises at first, of course, but it used to drop below the per-transistor cost of the previous node. You can see that in the chart going from 80nm to 55nm and then from 45nm to 28nm. Moving to 20nm means your initial per-transistor cost goes up, yet you never see it drop below the previous node's cost over the life of the node. So the incentive to reduce node size is not there. Why move to 20nm and then 14nm when your initial costs will rise and will not be recovered as increased profits down the road? It all makes sense when you look at the next chart, which shows wafer costs increasing from node to node, and it's getting worse and worse. It's easy to see why: node to node, fabrication costs are rising significantly because of advanced lithography steps that were not necessary at the larger node sizes (double/triple exposure, etc.).

- Joined

- Oct 11, 2005

- Location

- Tau'ri

Ahh, see, what I saw on the chart was that the older "more cost effective" transistors took longer to drop in price than the newer ones. I didn't see the difference in the bottom line. I thought that, given the short life span of each arch, a quick drop in price was more beneficial than a steady decline into obsolescence.

Except the 28nm and further shrinks not only drop faster in price but go lower. So I still do not get it.

For others that did not click the link...

It took 3 quarters for 40nm to reach a lower price per transistor than 80nm or 55nm (which spanned years), and the same 3 quarters for 28nm to match 40nm in price per transistor (with the same 3 quarters projected for the next couple of die shrinks). The cost drops 80% from the start to less than 1 month in, comparing projected vs. actual, and it continues to decline as inventory expands and supply crushes prices down.

As an aside, Nikon boasted 200 wafers an hour at 20nm using their equipment. Surely more wafers per hour means less cost, especially when talking about supply.

Now I do understand that this argument is saying that "If same transistor cost" a 22nm design with more transistors costs more than 32nm chip with less than half the transistors.

And there is more to it than that. As mentioned earlier, the 65nm Q6600 only had 582 million transistors, versus a Sandy Bridge with 995 million, which adds an IGP, an IMC, and more cache.

So nVidias charts show that despite the reduction in transistor cost, the increase in transistors leads to a higher price.

See I am capable of seeing both sides.

The only issue is, nVidia is the only one claiming that. Everyone else claims a reduction in cost. Now, Intel's relatively recent move to integrated GPU and IMC and the increased cache is offset by not building that into their chipsets. No advanced IOH extra power etc.

Now all you need is a digital to analog converter for the FDI and you're off and running. ASUS has made money on their separate chips that allow remote control of everything, despite that being built into Intel chips on workstation chipsets. A value add Intel did not need to add, but it is why ASUS software needs Intel MEI to work.

Anyway back to the nVidia point. nVidia is complaining they can no longer justify $500 flagship costs at sub 28nm. Basically that is what they are saying. Which means software developers are no longer going to get their stipends to design TWIMTBP games, which is good news for AMD!

IF CPU designers were saying the same thing, it would be different. Did AMD say 20nm is going to drive up costs? Did Intel say that? Did ARM say that? No.

Apparently only nVidia says it. Which might mean something, but not to me, or most people.

Back to double cores per wafer each die shrink. I didn't say it, I repeated it; Intel said it. Would Big Blue lie? Despite the supposed doubling (or whatever) of transistor counts, die shrinks still happen. L# cache is the size culprit. It's why SB-E chips are so big. Remember when 16 MB of RAM used to be a 3.5" PCB you added to a mobo? Ever think it could run at 6000 MHz?

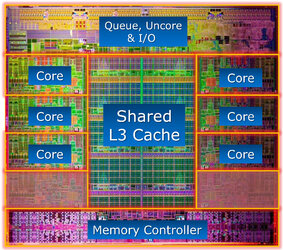

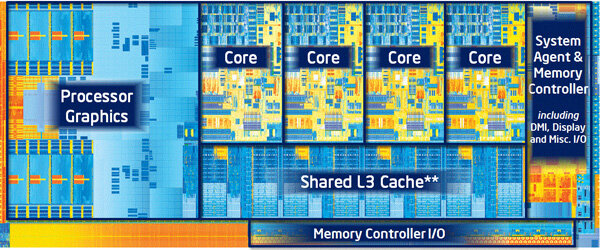

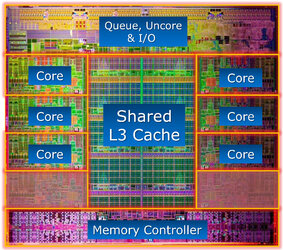

Oh here is another good pic of SB-E

Cost per TRIGATE transistor might go up, but trigate might be 5% of every CPU... every DIE is an 8 core...

- Joined

- Oct 11, 2005

- Location

- Tau'ri

More food for thought:

http://news.softpedia.com/news/20-n...Year-for-AMD-Qualcomm-and-Nvidia-270723.shtml

(oops except nvidia. Only AMD qualcomm and TI. )

From your link: "The move will most likely start during the second half of next year and it will probably be quite expensive, as it is estimated that the costs of the shrink will only be amortized after building and selling at least 100 million 20 nm chips."

Do you realize the street value of this mountain? It is pure snow. I loved that movie Better Off Dead.

- Joined

- Oct 11, 2005

- Location

- Tau'ri

From your link Quote:The move will most likely start during the second half of next year and it will probably be quite expensive, as it is estimated that the costs of the shrink will only be amortized after building and selling at least 100 million 20 nm chips.

Do you realize the street value of this mountain? It is pure snow. I loved that movie Better Off Dead.

You won with that argument alone.

The street value of this mountain supersedes anything said before this post by anyone.

Not sure about the 100 million chip thing. If it was so expensive why would they do it? How many chips on current arch do they have to sell before they start to make a profit?
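One way to think about the "100 million chips" question is as a plain break-even calculation. The Python sketch below is only an illustration: the fixed cost and the per-chip margin are invented numbers chosen so the result lands on 100 million, not figures from the article or from any foundry.

```python
import math


def break_even_units(fixed_cost, margin_per_unit):
    """Units that must be sold before a one-time cost is paid back."""
    if margin_per_unit <= 0:
        raise ValueError("margin per unit must be positive to ever break even")
    return math.ceil(fixed_cost / margin_per_unit)


# Hypothetical inputs: $2 billion to bring up the new node,
# $20 of margin per chip available to pay that back.
units = break_even_units(2_000_000_000, 20)
print(f"chips needed to amortize the shrink: {units:,}")
```

The same function answers the second question for any current architecture: plug in its development cost and per-chip margin. Higher-margin parts amortize far sooner than low-margin mobile parts.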

- Joined

- Mar 25, 2011

- Location

- Pacific NW USA

What Nvidia is saying is this. Look at 50nm. It starts at 0.8 normalized cost per transistor in Q2 2007 and reaches 0.3 at Q4 2010. Two quarters prior to that they started 40nm, which shot the per-transistor cost up to 1.0 (or above), but its advantages were: A) higher transistor density and therefore a more powerful GPU; B) its normalized cost dropped pretty quickly; and most importantly C) 40nm didn't require massive and extremely expensive changes in lithography equipment and production line retooling, so the additional cost to produce (the higher normalized cost per transistor, which you've mistakenly confused with consumer price, which is affected by inventories etc.) is fairly quickly recouped.

Now look at 28nm to 20nm. 28nm starts at Q3 2011 and runs to Q3 2013, where they show the projected 20nm process start. So at Q3 2013 they project 28nm to be at a production cost just north of 0.2 per transistor. The best case scenario for 20nm is for its production cost to match that same level. So what incentive do they have to switch to 20nm when the cost is going to jump up significantly at first compared to where they currently are, has no savings potential in the future, and is taking longer to come down as they go smaller, instead of less time as they were seeing previously?

What you're failing to see is that even with Intel, as the node size progresses to 20nm and smaller, each fabrication company or division of a company is having to invest large amounts of money in new and much more expensive lithography processes that were previously unneeded. This is because the node size has become so small that the processes they've used in the past don't have a high enough resolution for wafer yields to remain at the same level. Which is why you see on the next slide that wafer costs are rising significantly and exponentially. In other words, they've reached a tipping point in the production of ICs, whether they are CPUs or GPUs: they are getting more and more expensive to produce and take longer and longer to recoup the costs.
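The argument above can be reduced to one ratio. This Python sketch is a rough model, not Nvidia's: it assumes cost per transistor is wafer cost divided by good transistors per wafer, and the wafer cost ratios and the yield ratio are hypothetical placeholders. Only the density gain is derived from the node names, via ideal area scaling.

```python
def relative_cost_per_transistor(wafer_cost_ratio, density_gain, yield_ratio):
    """New-node cost per transistor relative to the old node (1.0 = same).

    wafer_cost_ratio: new wafer cost / old wafer cost
    density_gain:     new transistors per mm^2 / old transistors per mm^2
    yield_ratio:      new yield / old yield
    """
    return wafer_cost_ratio / (density_gain * yield_ratio)


density_gain_28_to_20 = (28 / 20) ** 2  # ideal area scaling, about 1.96x

# Hypothetical case 1: wafers cost 30% more, yield is 80% of the old node's.
cheap_wafers = relative_cost_per_transistor(1.3, density_gain_28_to_20, 0.8)

# Hypothetical case 2: wafers cost 60% more, same yield penalty.
pricey_wafers = relative_cost_per_transistor(1.6, density_gain_28_to_20, 0.8)

print(f"wafer cost +30%: {cheap_wafers:.2f}x cost per transistor")
print(f"wafer cost +60%: {pricey_wafers:.2f}x cost per transistor")
```

In the first case the shrink still pays off; in the second, the per-transistor cost ends up slightly above the old node even though density nearly doubled. That is the tipping point being described: once wafer cost grows faster than density times yield, a shrink stops saving money.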

http://news.softpedia.com/news/20-n...Year-for-AMD-Qualcomm-and-Nvidia-270723.shtml

The move will most likely start during the second half of next year and it will probably be quite expensive, as it is estimated that the costs of the shrink will only be amortized after building and selling at least 100 million 20 nm chips.

That's precisely why they are looking to new technology to solve this problem by looking in areas such as a replacement for silicon.

Here's another interesting article to read.

http://www.electroiq.com/articles/s...ool-explores-tradeoffs-at-20nm-and-below.html

- Joined

- Oct 11, 2005

- Location

- Tau'ri

What Nvidia is saying is this. Look at 50nm. It starts at 0.8 normalized cost per transistor in Q2 2007 and reaches 0.3 at Q4 2010.

Which boils down to a 60% cost reduction over 3 years.

20nm drops 75% in cost (from .8 to .2) in half the time. (6 quarters) based on the projected 2 years cycle that makes 20nm a more cost effective solution than previous nodes.
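For anyone who wants to check the percentages, here is a short Python sketch using the chart readings quoted in this thread. The 14-quarter span is my count from Q2 2007 to Q4 2010, and the 6 quarters are taken from the post above; the readings themselves are eyeballed from a slide, so treat the output as approximate.

```python
def percent_drop(start, end):
    """Total drop from start to end, as a percentage of start."""
    return (start - end) / start * 100


def per_quarter_decline(start, end, quarters):
    """Constant per-quarter percentage decline that takes start to end."""
    return (1 - (end / start) ** (1 / quarters)) * 100


# Older node: 0.8 -> 0.3 over 14 quarters (Q2 2007 to Q4 2010).
print(f"older node total drop: {percent_drop(0.8, 0.3):.1f}%")
print(f"older node per quarter: {per_quarter_decline(0.8, 0.3, 14):.1f}%")

# Projected 20nm: 0.8 -> 0.2 over 6 quarters.
print(f"20nm total drop: {percent_drop(0.8, 0.2):.1f}%")
print(f"20nm per quarter: {per_quarter_decline(0.8, 0.2, 6):.1f}%")
```

Note that this only measures how fast each curve falls. It says nothing about whether the new node ever ends up below the old node's final cost, which is the other half of the disagreement.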

Now 2 quarters prior to that they started 40nm, which shot the per-transistor cost up to 1.0 (or above), but its advantages were: A) higher transistor density and therefore a more powerful GPU; B) its normalized cost dropped pretty quickly; and most importantly C) 40nm didn't require massive and extremely expensive changes in lithography equipment and production line retooling, so the additional cost to produce (the higher normalized cost per transistor, which you've mistakenly confused with consumer price, which is affected by inventories etc.) is fairly quickly recouped.

40nm was a big issue at production. TSMC had tons of problems and was months late bringing it into production because of those issues. (In actuality they were EXTREMELY late on 45nm and just called it 40nm, and they were late even with that, considering it should have been a half-node advance over 45nm.)

Now look at 28nm to 20nm. 28nm starts at Q3 2011 and runs to Q3 2013, where they show the projected 20nm process start. So at Q3 2013 they project 28nm to be at a production cost just north of 0.2 per transistor. The best case scenario for 20nm is for its production cost to match that same level. So what incentive do they have to switch to 20nm when the cost is going to jump up significantly at first compared to where they currently are, has no savings potential in the future, and is taking longer to come down as they go smaller, instead of less time as they were seeing previously?

If it's an issue, why are they doing it? The article states GPUs use so many transistors that they can absorb the cost of die shrinks, because they can pack more transistors in and make insanely powerful GPUs. If 20nm is going to be too expensive, nVidia can take a back seat for a few months and let someone else eat the production cost until the transistor cost drops below 28nm, right? But they can't, can they...

What you're failing to see is that even with Intel, as the node size progresses to 20nm and smaller, each fabrication company or division of a company is having to invest large amounts of money in new and much more expensive lithography processes that were previously unneeded. This is because the node size has become so small that the processes they've used in the past don't have a high enough resolution for wafer yields to remain at the same level. Which is why you see on the next slide that wafer costs are rising significantly and exponentially. In other words, they've reached a tipping point in the production of ICs, whether they are CPUs or GPUs: they are getting more and more expensive to produce and take longer and longer to recoup the costs.

http://news.softpedia.com/news/20-n...Year-for-AMD-Qualcomm-and-Nvidia-270723.shtml

That's precisely why they are looking to new technology to solve this problem by looking in areas such as a replacement for silicon.

Here's another interesting article to read.

http://www.electroiq.com/articles/s...ool-explores-tradeoffs-at-20nm-and-below.html

I do see that a new process is required and understand the investment necessary. I already posted that Softpedia link, which I just learned is wrong: it claims they are moving to bulk CMOS production and abandoning SOI, when in actuality GloFo is moving to fully depleted SOI for now, with adoption of 3D FinFETs in the near future. The design stage is the same, but it still has the SOI advantages. Bulk requires more voltage and capacitance to operate on par with SOI designs, which is not effective on high speed processors like a CPU.

FD-SOI link and FD-3D

Original Industry Press release

source:http://www.advancedsubstratenews.com/

The Pentium Extreme Edition launched at $1200 in May 2005 on 90nm (later shrunk to 65nm) 3.2 GHz unlocked dual core with hyperthreading.

The Intel core i7 980X launched at $999 in March 2010 on 32nm, 3.33GHz unlocked 6 core with 12MB cache and hyperthreading. Despite inflation totaling a 12.7% increase in the same 5 year span it cost ~20% less to buy a chip with three times the cores and an insane IPC increase to boot.
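The price comparison above is easy to check in a few lines of Python. The two launch prices and the 12.7% cumulative inflation figure are taken from this post as given; I have not verified them independently.

```python
def nominal_drop_percent(old_price, new_price):
    """Price drop in plain dollars, ignoring inflation."""
    return (old_price - new_price) / old_price * 100


def real_drop_percent(old_price, new_price, cumulative_inflation):
    """Price drop after expressing the old price in new-year dollars."""
    old_price_adjusted = old_price * (1 + cumulative_inflation)
    return (old_price_adjusted - new_price) / old_price_adjusted * 100


old_price, new_price, inflation = 1200, 999, 0.127

print(f"nominal drop: {nominal_drop_percent(old_price, new_price):.2f}%")
print(f"real drop:    {real_drop_percent(old_price, new_price, inflation):.2f}%")
```

On those inputs the nominal drop is a bit under 17% and the inflation-adjusted drop is about 26%, so "~20% less" sits between the two readings.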

I decided to do a search on "32nm more expensive" and found plenty of articles saying exactly that. Despite the increase in cost, inflation, and all the other things, it still led to more profits for Intel as well as a lower cost for the end user.

I understand what you are saying, but for some reason, companies are making more profit on these more expensive technologies despite offering faster products at a lower price. How? Especially when for SSDs the dieshrinks have had HUGE impacts on price.... 50nm to 34nm MLC NAND

Source: Intel. "The move to 34nm will help lower prices of the SSDs up to 60 percent for PC and laptop makers and consumers who buy them due to the reduced die size and advanced engineering design."

EDIT EDIT: Oh, I guess in my trimming I deleted my comment about marchitecture lying about actual design size. For instance, TSMC and their 45nm ("oops, now it's 40nm since we were so late") claim, with the companies they were producing chips for still calling them 45nm... and the fact that 65nm and 45nm chips both used 40nm gates... I theorized that Intel's 22nm isn't 22nm at all, but that the tri-gate design allows 2/3 of the surface area to be used, thus... 32nm still. (That's incorrect: in the tri-gate design the third gate is not like adding on 50% more transistors, but it helps to reduce leakage, reduce doping, etc.) Oh, but the gates on the 22nm process are 25nm :S

Well just read this on wiki...

Intel will need to reach a new manufacturing process every two years; this would imply going to 14 nm node as early as 2013. However, for Intel, the design rule at this node designation is actually about 30 nm.[4]

Design rule is important to note because it is the only standard for marchitecture and no company appears to follow it. wiki link

This has nothing to do with the cost discussion we are having, just interesting I thought

More 20nm good news

Source: http://www.eetimes.com/electronics-news/4374611/GloFo--TSMC-report-process-tech-progress

"Meanwhile at least three companies are about to participate in Globalfoundries’ first multi-process wafer to qualify its 20 nm process also in New York. The company expects to run several of the shuttles this year so it can start 20 nm production early in 2013 with tested third-party IP where needed."

EDIT: On a bad note, by the time we have 20nm CPUs from AMD, Intel will be taping out their next half-node shrink. *sigh*

- Joined

- Mar 25, 2011

- Location

- Pacific NW USA

What I'm trying to say is that when it comes to Intel, their profit per chip is something like this (just a guesstimate based on what I've read, and an example, not exact figures): a chip costs, say, $60 to produce and sells at retail for $219. I know of one example: the Atoms were costing somewhere around $4 to produce, and the cost to computer makers like HP was something like $20 (can't remember exactly). It's not that they are barely making any profit, but increased costs are cutting more and more into the existing profit.

Even at 32nm the costs didn't increase a whole lot. I'll dig up the articles I read again (just have to remember which silicon industry magazine it was). If I remember correctly, the added cost at 32nm came from having to do a double exposure process in the lithography. Moving to 20nm, they're using things like that machine (I think it was Fujitsu) that has the lens submerged in liquid to improve the resolution at the smaller node size, as well as other expensive processes. It requires a special liquid to help keep lens abrasion down to an acceptable level. And as they move to smaller and smaller nodes, the problems (sidewall leakage, etc.) that they didn't have to deal with at 32nm and above become greater. Eventually I'm sure the costs will come down as they develop new technology, but that's going to take time.

As far as AMD goes, I think they're doing the smart thing. Let Intel be the guinea pig in development and R&D on the advanced nodes. Meanwhile, they start to use a completely different architecture that will have efficiency advantages as the software catches up to it in the future (multi-threading etc.) and start to move away from the old x86 and x87 architecture, which is unnecessary now.

- Joined

- Oct 11, 2005

- Location

- Tau'ri

Well, the future is Fusion; a completely new arch is probably necessary.

Can't find the slide right now.

Showed

CPU-----GPU on same package

CPU-GPU on same die

C(g)P(p)U(u) coexisting in same space. It will be neat to see how they pull that off
