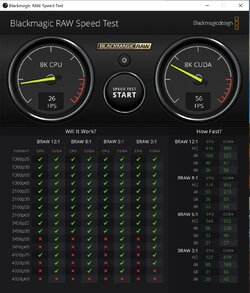

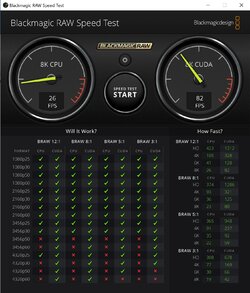

https://www.pugetsystems.com/labs/a...VIDIA-GeForce-RTX-3080-3090-Performance-1903/

I know that this shouldn't even be an issue... but somehow it is. I haven't really thought much about graphics cards in years. I usually get the most reasonable used NVIDIA card I can find... and so far, ten times out of ten, that has been enough for whatever I've needed to do over the past ten years. (I don't think I've bought a brand new video card since the EARLY 2000s.) But... as everyone here knows... this is an unusual year. We've got the 3080 out at an unprecedented price/performance level and the 3070 soon to come.

After reading this article: https://www.pugetsystems.com/labs/a...RandAMDRadeonRX5700XTperforminDaVinciResolve?

...and another that included the 1080 Ti... I became convinced that there simply wasn't enough of a performance difference, in the DaVinci Resolve video editor mind you... for me to care about anything beyond an RTX 2060 Super. (Literally 1 fps difference between that and an RTX 2070 Super.)

But then I saw their updated chart (up top) with the 3080... and that gave me pause.

Not because of the huge performance increase so much (though I'm sure that is a very nice thing...)

...but because of the PRICE!

I don't think I can swing an RTX 2060 Super... or even a used 1080 Ti... for anything under 350. That's just the reality. And if I pay that much for one now... the value of that card is just going to RAPIDLY decline as soon as the 3080 is more widely available.

This places me in a weird dilemma as I have projects to edit NOW...

So the most logical thing (which simultaneously strikes me as insane) would seem to be spending twice as much as I was looking to spend and getting the 3080. Or just scraping the bottom of eBay for a used 1080 Ti and holding onto that until the 3060s come out. (I mean... how low could a 1080 Ti POSSIBLY go on eBay?)

International Superstar TeamRainless would normally just get the 3080... but with the release... next month, mind you... of both the PS5 and Xbox Series X... this is shaping up to be an unusually expensive year... So I'm trying to save a little money wherever I can.

What say you?
